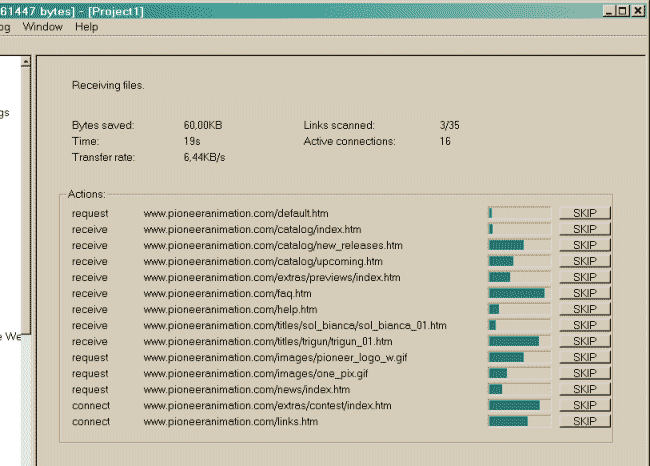

HTTrack Website Copier essentially works just as its name suggests: give the program a URL, and it'll try to download the entire website so you have your own local copy, perfect if you'd like to refer to the site while offline.

Obviously you need to be careful about the URLs you provide. It might be convenient to have a local copy of Wikipedia, for instance, but you probably don't have enough bandwidth to download every single page from the site. Initially you might be better off using the program to, say, make a local copy of a personal or business site; you'll then end up with all the various HTML pages saved on disc, so it's easy to present them to others, even if you're not currently connected to the web.

Of course, sometimes you may only want to download sections of a site, and here exploring HTTrack's options dialog may help. Click Set Options > Limits and you'll find you can, for instance, set the maximum size of HTML and non-HTML files, as well as the maximum number of links to follow.

Otherwise, though, the program is very straightforward to use: just follow the setup wizard, point it at the domain, and before you know it HTTrack will be downloading the first page, following links to other pages on the same site, downloading those as well, and so on. If you need an offline copy of a website then the program will produce one just as soon as your download speeds will allow.
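To make the idea concrete, here is a minimal Python sketch of the general approach described above: start at one page, follow links that stay on the same host, and stop when a link or size limit is reached. It is not HTTrack's own code. The names `mirror`, `LinkParser`, `fetch`, `max_links` and `max_html_bytes` are all hypothetical, chosen only to echo the kind of limits the options dialog exposes, and the sketch handles only HTML pages (no images, stylesheets or link rewriting).

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin, urlparse


class LinkParser(HTMLParser):
    """Collects the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def mirror(start_url, fetch, max_links=100, max_html_bytes=1_000_000):
    """Breadth-first crawl of a single host; returns {url: html}.

    fetch(url) is a hypothetical callable supplied by the caller. It should
    return the page body as a str, or None if the page cannot be retrieved.
    """
    host = urlparse(start_url).netloc
    queue = deque([start_url])
    seen = {start_url}  # every URL queued so far, including the start page
    pages = {}

    while queue:
        url = queue.popleft()
        html = fetch(url)
        if html is None or len(html.encode("utf-8")) > max_html_bytes:
            continue  # skip missing or oversized pages

        pages[url] = html
        parser = LinkParser()
        parser.feed(html)

        for href in parser.links:
            # Resolve relative links and drop any #fragment part.
            link, _fragment = urldefrag(urljoin(url, href))
            if urlparse(link).netloc != host or link in seen:
                continue  # stay on the same site, never queue a page twice
            if len(seen) >= max_links:
                break  # link limit reached, stop queueing new pages
            seen.add(link)
            queue.append(link)

    return pages
```

Passing `fetch` in as a parameter keeps the sketch free of network code, so it can be tried against an in-memory dictionary of pages (for example `mirror("http://example.test/", site.get)`). For a real site you would supply your own function, perhaps one built on `urllib.request.urlopen`, and write each returned page to disc.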

Version 3.48-22 brings:

+ Fixed: on Windows, fixed possible DLL local injection (Tunisian Cyber)

+ Fixed: various typos

Verdict:

An easy-to-use and highly configurable offline browser
